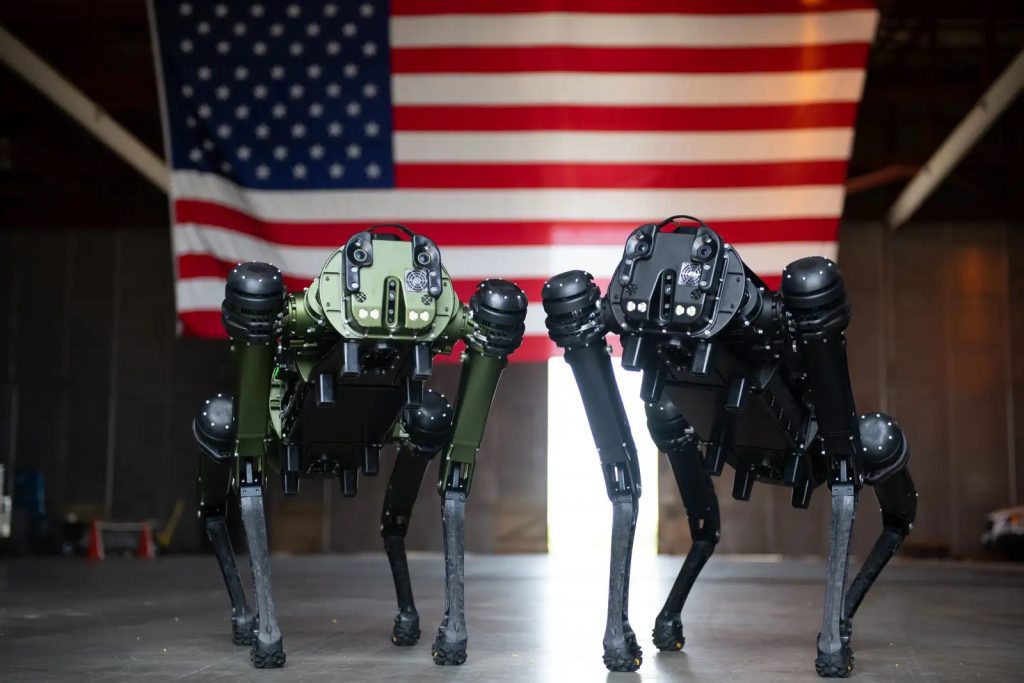

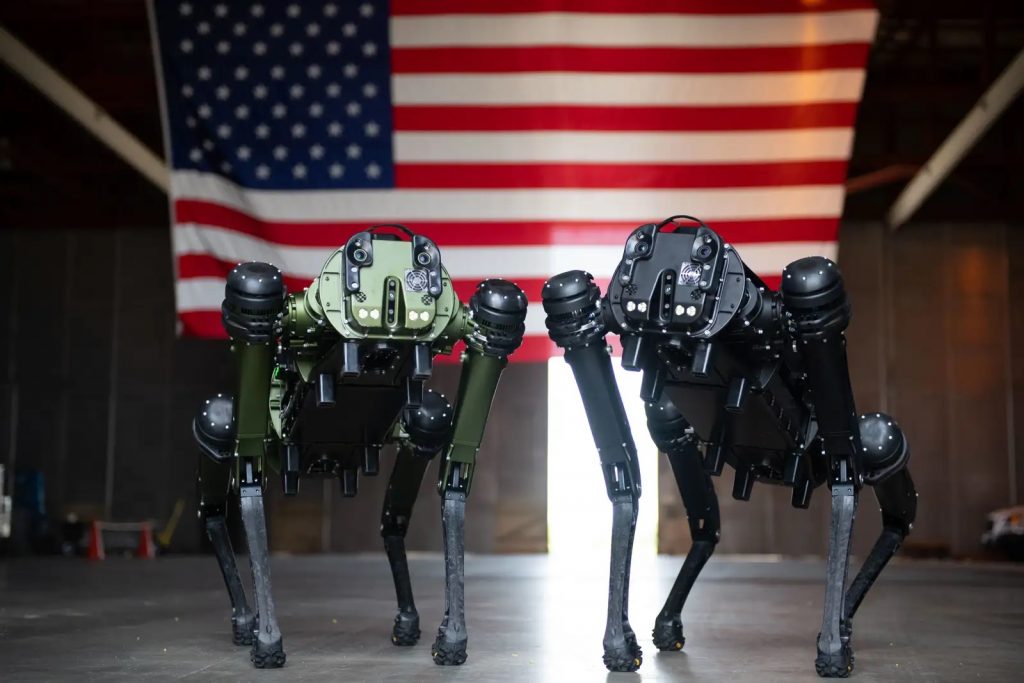

Ghost Robotics Vision 60 Q-UGV. US Space Force photo by Senior Airman Samuel Becker

By Toby Walsh (Professor of AI at UNSW, Research Group Leader, UNSW Sydney)

You might suppose Hollywood is good at predicting the future. Indeed, Robert Wallace, head of the CIA’s Office of Technical Service and the US equivalent of MI6’s fictional Q, has recounted how Russian spies would watch the latest Bond movie to see what technologies might be coming their way.

Hollywood’s continuing obsession with killer robots might therefore be of significant concern. The newest such movie is Apple TV’s forthcoming sex robot courtroom drama Dolly.

I never thought I’d write the phrase “sex robot courtroom drama”, but there you go. Based on a 2011 short story by Elizabeth Bear, the plot concerns a billionaire killed by a sex robot that then asks for a lawyer to defend its murderous actions.

The real killer robots

Dolly is the latest in a long line of movies featuring killer robots – including HAL in Kubrick’s 2001: A Space Odyssey, and Arnold Schwarzenegger’s T-800 robot in the Terminator series. Indeed, conflict between robots and humans was at the centre of the very first feature-length science fiction film, Fritz Lang’s 1927 classic Metropolis.

But almost all these movies get it wrong. Killer robots won’t be sentient humanoid robots with evil intent. This might make for a dramatic storyline and a box office success, but such technologies are many decades, if not centuries, away.

Indeed, contrary to recent fears, robots may never be sentient.

It’s much simpler technologies we should be worrying about. And these technologies are starting to turn up on the battlefield today in places like Ukraine and Nagorno-Karabakh.

A war transformed

Movies that feature much simpler armed drones, like Angel Has Fallen (2019) and Eye in the Sky (2015), paint perhaps the most accurate picture of the real future of killer robots.

On the nightly TV news, we see how modern warfare is being transformed by ever-more autonomous drones, tanks, ships and submarines. These robots are only a little more sophisticated than those you can buy in your local hobby store.

And increasingly, the decisions to identify, track and destroy targets are being handed over to their algorithms.

This is taking the world to a dangerous place, with a host of moral, legal and technical problems. Such weapons will, for example, further upset our troubled geopolitical situation. We already see Turkey emerging as a major drone power.

And such weapons cross a moral red line into a terrible and terrifying world where unaccountable machines decide who lives and who dies.

Robot manufacturers are, however, starting to push back against this future.

A pledge not to weaponise

Last week, six leading robotics companies pledged they would never weaponise their robot platforms. The companies include Boston Dynamics, which makes the Atlas humanoid robot, which can perform an impressive backflip, and the Spot robot dog, which looks like it’s straight out of the Black Mirror TV series.

This isn’t the first time robotics companies have spoken out about this worrying future. Five years ago, I organised an open letter signed by Elon Musk and more than 100 founders of other AI and robot companies calling for the United Nations to regulate the use of killer robots. The letter even knocked the Pope into third place for a global disarmament award.

However, the fact that leading robotics companies are pledging not to weaponise their robot platforms is more virtue signalling than anything else.

We have, for example, already seen third parties mount guns on clones of Boston Dynamics’ Spot robot dog. And such modified robots have proven effective in action. Iran’s top nuclear scientist was assassinated by Israeli agents using a robot machine gun in 2020.

Collective action to safeguard our future

The only way we can safeguard against this terrifying future is if nations collectively take action, as they have with chemical weapons, biological weapons and even nuclear weapons.

Such regulation won’t be perfect, just as the regulation of chemical weapons isn’t perfect. But it will prevent arms companies from openly selling such weapons, and thus limit their proliferation.

Even more important than a pledge from robotics companies, therefore, is the UN Human Rights Council’s recent unanimous decision to explore the human rights implications of new and emerging technologies like autonomous weapons.

Several dozen nations have already called for the UN to regulate killer robots. The European Parliament, the African Union, the UN Secretary General, Nobel peace laureates, church leaders, politicians and thousands of AI and robotics researchers like myself have all called for regulation.

Australia is not a country that has, so far, supported these calls. But if you want to avoid this Hollywood future, you may want to take it up with your political representative next time you see them.

Toby Walsh does not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

This article appeared in The Conversation.

The Conversation is an independent source of news and views, sourced from the academic and research community and delivered direct to the public.